On Democratising AI and the GPU Shortage: Part 1

White Star Capital Digital Assets Fund - Newsletter #158

On Democratising AI, the GPU Shortage and the Potential of Decentralised Training and Inference Networks

By Marthe Naudts

Software may have eaten the world, but microchips digested it.

Otherwise known as semiconductors, chips are grids of millions or even billions of transistors that process the 1s and 0s that make up that very software. Every iPhone, every TV, every email, every photo, every YouTube video, every single device and every asset in our online world is powered by these tiny switches.

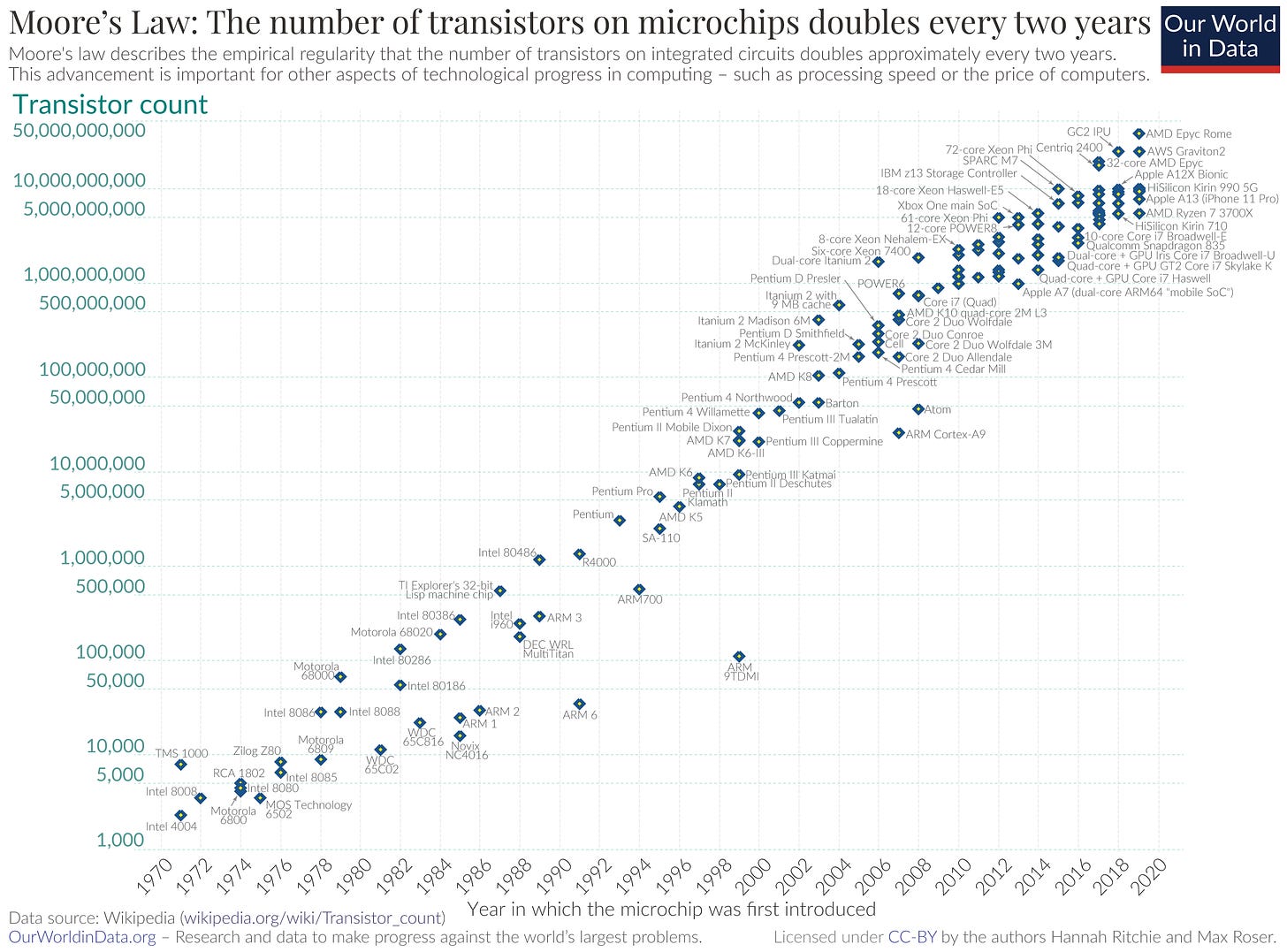

Fabricating and miniaturising semiconductors has been the single greatest engineering challenge of modern society. Moore's Law (the number of transistors on a chip doubles roughly every two years) and Rock's Law (the cost of a chip fab doubles roughly every four years), alongside ongoing debates about their limits, continue to drive the industry in its pursuit of ever more processing power.

Nvidia's H100s and A100s are the industry's latest and most powerful parallel-processing GPUs, and the main chips powering the transformer models behind the recent AI boom. Faced with skyrocketing demand and supply bottlenecks, even hyperscalers like Amazon Web Services (AWS) and Google Cloud Platform (GCP) cannot keep up.

This leaves a multi-billion-dollar opportunity for SaaS and marketplace businesses that connect disparate idle compute capacity, like CoreWeave and Lambda, or for more creative decentralised solutions such as Gensyn, Together.ai and Akash.

And so we come to the crux of what I want to explore in this three-part series.

Can the challenges of securing, coordinating, and verifying disparate compute supply make this a winner-takes-all market, or is this one where many start-ups will emerge to form a superfluous middle layer, competing to secure a small piece of the fundamentally commoditised and highly divisible GPU pie?

Part 1: The State of Play

CPUs and the Von Neumann Bottleneck

For decades, as our appetite for computation outstripped what Moore's Law could deliver, engineers considered their biggest challenge to be fabricating ever-smaller transistors to increase central processing unit (CPU) speeds. Today, the densest chips (3D-stacked flash memory) fit up to 5.3 trillion MOS transistors. Sixty years ago, that number was just four.
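As a rough back-of-the-envelope check, the two figures in this paragraph (4 transistors about 60 years ago, up to 5.3 trillion today) imply a doubling period for transistor counts. The sketch below assumes smooth exponential growth between those two endpoints. That is a simplification, since an early four-transistor chip and today's densest chips are very different devices.

```python
import math

def implied_doubling_period(start_count: float, end_count: float, years: float) -> float:
    """Years per doubling, assuming smooth exponential growth between two data points."""
    doublings = math.log2(end_count / start_count)  # how many times the count doubled
    return years / doublings

# Figures from the text: 4 transistors ~60 years ago, up to 5.3 trillion today.
doublings = math.log2(5.3e12 / 4)
period = implied_doubling_period(4, 5.3e12, 60)
print(f"{doublings:.1f} doublings, one every {period:.2f} years")
```

That works out to roughly 40 doublings, or one about every 18 months. This is in the same ballpark as the "roughly every two years" usually quoted for Moore's Law.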

But in recent years, as processor speeds have outpaced the rate at which data can travel between processor and memory, engineers find themselves facing a new bottleneck: the very architecture of the computing system.

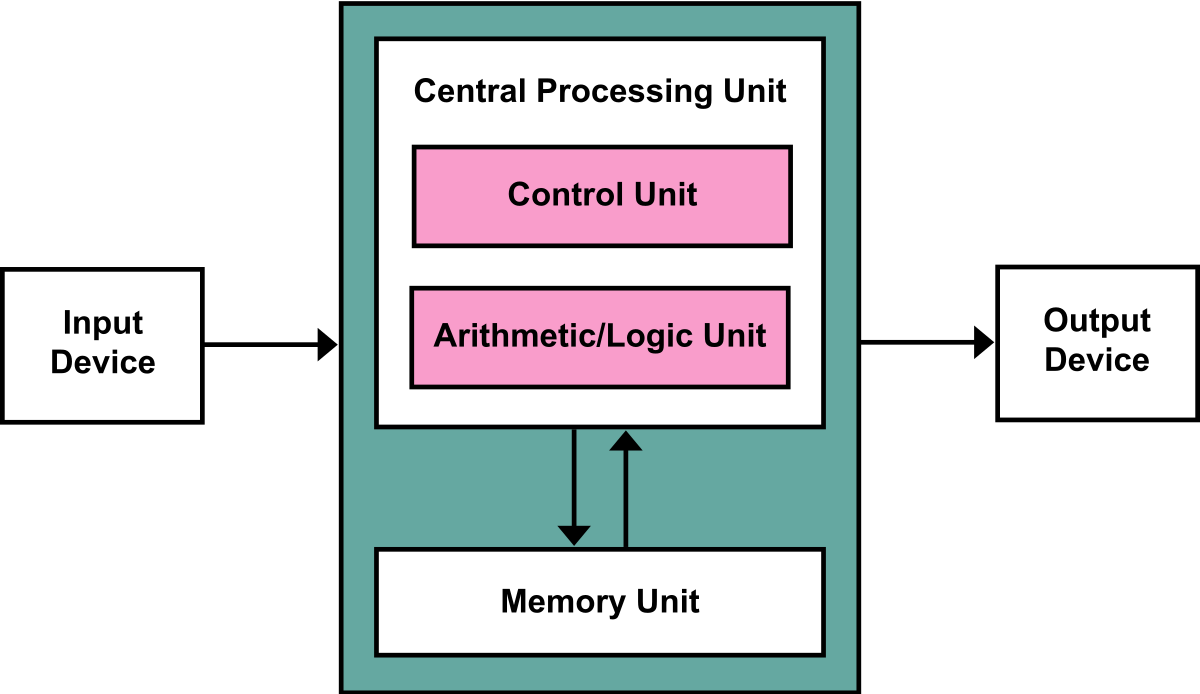

Most general-purpose computers are based on the Von Neumann architecture, which, in its simplest form, has the following components:

A processing unit (with an arithmetic logic unit and processor registers)

A control unit (with an instruction register and a program counter)

Memory (that stores data and instructions)

Computers with a Von Neumann architecture cannot perform an instruction fetch and a data operation at the same time, because both share a common 'bus'. An operation like 1+2 involves first retrieving 1 from memory, then 2, then performing the addition, and finally storing the result back in memory. Because the single bus can only access one of the two classes of memory (instructions or data) at a time, the CPU is continually forced to wait for the data it needs to move to or from memory. The throughput (data transfer rate) between the CPU and memory is therefore limited relative to the amount of memory.

Over the past half-century, as Moore's Law has played out, CPU processing speed has increased much faster than the throughput between CPU and memory, so this structural bottleneck has become the primary problem. And as processor speed and memory size continue to grow, the problem only becomes more severe with each new generation of CPU.
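To make the bottleneck concrete, here is a hypothetical toy machine that runs the 1+2 example. It is not any real instruction set. It counts one bus cycle per memory transfer and assumes the simplest possible design: one memory holding both program and data, one shared bus, and one accumulator register.

```python
from dataclasses import dataclass

@dataclass
class ToyVonNeumannMachine:
    """Hypothetical toy model: a single shared bus carries instruction fetches AND data."""
    memory: dict          # one memory holds both the program and its data
    acc: int = 0          # accumulator register
    bus_cycles: int = 0   # every memory transfer costs one bus cycle
    alu_ops: int = 0      # useful arithmetic actually performed

    def _read(self, addr):
        self.bus_cycles += 1  # the bus is busy; nothing else can move
        return self.memory[addr]

    def _write(self, addr, value):
        self.bus_cycles += 1
        self.memory[addr] = value

    def run(self, start_pc=0):
        pc = start_pc
        while True:
            op, arg = self._read(pc)  # instruction fetch competes for the same bus
            pc += 1
            if op == "LOAD":
                self.acc = self._read(arg)
            elif op == "ADD":
                self.acc += self._read(arg)
                self.alu_ops += 1
            elif op == "STORE":
                self._write(arg, self.acc)
            elif op == "HALT":
                return
            else:
                raise ValueError(f"unknown opcode {op!r}")

# Program for 1 + 2: instructions at addresses 0-3, data at 100-102.
memory = {
    0: ("LOAD", 100), 1: ("ADD", 101), 2: ("STORE", 102), 3: ("HALT", None),
    100: 1, 101: 2, 102: 0,
}
machine = ToyVonNeumannMachine(memory)
machine.run()
print(machine.memory[102], machine.bus_cycles, machine.alu_ops)  # 3 7 1
```

A single addition needs seven bus transfers in this model. The arithmetic unit is idle most of the time, waiting on the bus. Making the addition itself faster would barely speed this program up, because the memory traffic dominates.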

No field suffers more from this than the burgeoning field of Artificial Intelligence (AI), where models with billions or even trillions of parameters could never feasibly be trained on this CPU architecture.

GPUs and the Promise of Parallel Processing

GPUs circumvent the serial processing issue caused by the Von Neumann bottleneck by processing calculations in parallel. Each GPU consists of a large number of smaller processing units known as cores, each of which can execute its instructions independently of the others. Nvidia was founded in 1993 by Chris Malachowsky, Curtis Priem and today's CEO, Jensen Huang. It originally designed GPUs for graphical workloads like 3D graphics and games. Unlike Intel's microprocessors and other general-purpose CPUs, GPUs could render realistic images much more quickly by determining the shade of each pixel in parallel. Today, whilst Intel and others have developed their own GPUs, Nvidia's are particularly desirable because of their tensor cores. These are specialised for the matrix arithmetic behind numerical workloads like ML rather than for graphics.
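Per-pixel shading parallelises so well because each pixel's colour depends only on its own coordinates. The sketch below uses a hypothetical `shade` function and Python threads purely to show that splitting the work into independent pieces produces the same image as a serial loop. It does not reproduce GPU speed: standard CPython threads do not run CPU-bound code in parallel, and real GPU code would be written in something like CUDA.

```python
from concurrent.futures import ThreadPoolExecutor

WIDTH, HEIGHT = 64, 48  # hypothetical tiny image

def shade(x: int, y: int) -> int:
    """Hypothetical pixel shader: a diagonal grey gradient (0-255).
    The result depends only on this pixel's own coordinates."""
    return (x * 255 // (WIDTH - 1) + y * 255 // (HEIGHT - 1)) // 2

def render_row(y: int) -> list[int]:
    return [shade(x, y) for x in range(WIDTH)]

def render_serial() -> list[list[int]]:
    # CPU-style: one row (and one pixel) after another.
    return [render_row(y) for y in range(HEIGHT)]

def render_parallel(workers: int = 8) -> list[list[int]]:
    # GPU-style in spirit: rows are independent work items handed to a pool of workers.
    # pool.map returns results in input order, so the image is assembled correctly.
    with ThreadPoolExecutor(max_workers=workers) as pool:
        return list(pool.map(render_row, range(HEIGHT)))

print(render_parallel() == render_serial())  # True
```

Because no pixel needs another pixel's result, the work can be divided among as many workers as are available. A GPU exploits exactly this property across thousands of cores.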

In 2012, researchers at the University of Toronto realised they could use the parallel processing capabilities of GPUs to train AlexNet, a convolutional neural network, to label images. AlexNet entered and won the ImageNet Large Scale Visual Recognition Challenge with a top-5 error rate of 15.3%, more than 10 percentage points lower than the runner-up. This marked a turning point for deep learning.

The real watershed moment, however, was a 2017 paper titled 'Attention is All You Need'. It was written by researchers at Google looking to improve on the recurrent and convolutional neural network architectures behind machine translation. Traditional supervised machine learning (ML) models are trained on large sets of labelled data and then make predictions based on what they have learned. This works for applications where the data can be clearly defined and labelled, such as the image classification challenge that AlexNet tackled. But translating from one language into another, where the words and word order do not map one-to-one, requires contextual understanding of the entire input. Google's researchers proposed the transformer, a model that converts text into tokens and then weights them by their relevance to one another. Using an evolving set of mathematical techniques called attention, transformer models can attend to different parts of the input text. This lets them detect subtle meanings and relationships, even between distant elements of a sequence like the words in this sentence.
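The central operation in the paper is scaled dot-product attention, softmax(Q·K^T / sqrt(d_k))·V. Each token's query vector is compared against every token's key vector, and the resulting weights mix the tokens' value vectors. What follows is a minimal pure-Python sketch. The toy vectors stand in for the learned query, key and value projections, and multi-head attention, masking and batching are left out.

```python
import math

def softmax(xs):
    m = max(xs)                              # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def scaled_dot_product_attention(Q, K, V):
    """Q, K: lists of d_k-dim vectors; V: list of d_v-dim vectors (one per key).
    Returns (outputs, weights), where weights[i][j] is how much query i attends to token j."""
    d_k = len(K[0])
    outputs, weights = [], []
    for q in Q:
        # Similarity of this query with every key, scaled by sqrt(d_k).
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d_k) for k in K]
        w = softmax(scores)                  # attention weights sum to 1
        weights.append(w)
        # Output is the attention-weighted average of the value vectors.
        outputs.append([sum(wj * v[c] for wj, v in zip(w, V)) for c in range(len(V[0]))])
    return outputs, weights

# Three toy 2-dimensional token vectors, used as queries, keys and values at once.
tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
out, attn = scaled_dot_product_attention(tokens, tokens, tokens)
for row in attn:
    print([round(w, 3) for w in row])
```

Every query-key comparison inside the loop is independent of the others. In practice they are computed together as matrix multiplications, which is the kind of work GPUs (and their tensor cores) are built for.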

Models could now understand written text in a significantly more sophisticated way, and could learn new tasks without large labelled datasets. Today's large language models need to learn the patterns and structures of an entire language in order to generate output. Training them with recurrent neural networks, which process tokens one at a time, would take an unfeasible amount of time and computational resources. The architecture in 'Attention is All You Need', by contrast, is built from highly parallelisable components, so GPUs can perform these token-to-token comparisons at scale. That combination is the reason LLMs and AI applications have developed to where they are today.
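The difference in parallelism can be seen in miniature. In the hypothetical toy below, a scalar recurrent network must compute each hidden state from the previous one, so its loop cannot be reordered or split across cores. The attention-style pairwise scores, by contrast, each depend only on two inputs. They can therefore be computed in any order (simulated here by shuffling the schedule), which is what lets a GPU compute them all at once. The weights `w` and `u` and the simple product used as a score are illustrative assumptions, not a real model.

```python
import math
import random

def rnn_hidden_states(xs, w=0.5, u=1.0):
    """Toy scalar RNN: h_t = tanh(w * h_{t-1} + u * x_t).
    Each step needs the previous hidden state, so the loop is inherently sequential."""
    h = 0.0
    states = []
    for x in xs:
        h = math.tanh(w * h + u * x)
        states.append(h)
    return states

def pairwise_scores(xs, seed=None):
    """Toy attention-style scores: entry (i, j) depends only on xs[i] and xs[j].
    No entry depends on another, so they can be computed in any order."""
    n = len(xs)
    pairs = [(i, j) for i in range(n) for j in range(n)]
    if seed is not None:
        random.Random(seed).shuffle(pairs)  # simulate an arbitrary parallel schedule
    scores = [[0.0] * n for _ in range(n)]
    for i, j in pairs:
        scores[i][j] = xs[i] * xs[j]
    return scores

xs = [1.0, -1.0, 0.5]
print(pairwise_scores(xs) == pairwise_scores(xs, seed=42))           # True: order is irrelevant
print(rnn_hidden_states(xs)[-1] == rnn_hidden_states(xs[::-1])[-1])  # False: order matters
```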

And, as it turns out, even without any changes to their structure, these transformer-based models scale very well with more data. Older models struggled to generate anything useful with under 10m parameters, whereas GPT-1 had around 117m. That now pales in comparison to GPT-2's 1.5bn, GPT-3's 175bn and GPT-4's rumoured 1.7tn parameters.
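One way to see why these parameter counts drive demand for top-end GPUs is to estimate how much memory is needed just to hold a model's weights. The sketch below assumes 16-bit (2-byte) weights and an 80 GB H100, and uses roughly 117m parameters for GPT-1. The GPT-4 figure is only the rumoured one. The estimate ignores the considerably larger memory needed during training for gradients, optimiser state and activations.

```python
import math

BYTES_PER_PARAM = 2      # assumption: 16-bit (FP16/BF16) weights
GPU_MEMORY_GB = 80       # assumption: an 80 GB H100

# Parameter counts from the text (GPT-4's figure is only a rumour).
MODELS = {
    "GPT-1": 117e6,
    "GPT-2": 1.5e9,
    "GPT-3": 175e9,
    "GPT-4 (rumoured)": 1.7e12,
}

def weights_gb(params: float) -> float:
    """Memory (in GB) needed just to store the weights, ignoring training overheads."""
    return params * BYTES_PER_PARAM / 1e9

def min_gpus_for_weights(params: float) -> int:
    """Lower bound on the number of GPUs needed to hold the weights alone."""
    return math.ceil(weights_gb(params) / GPU_MEMORY_GB)

for name, params in MODELS.items():
    print(f"{name:<17} {weights_gb(params):>8.1f} GB  -> at least {min_gpus_for_weights(params)} GPU(s)")
```

Under these assumptions, a 175bn-parameter model's weights alone (350 GB) already need at least five such GPUs before any training happens. This is part of why these models are trained across large GPU clusters.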

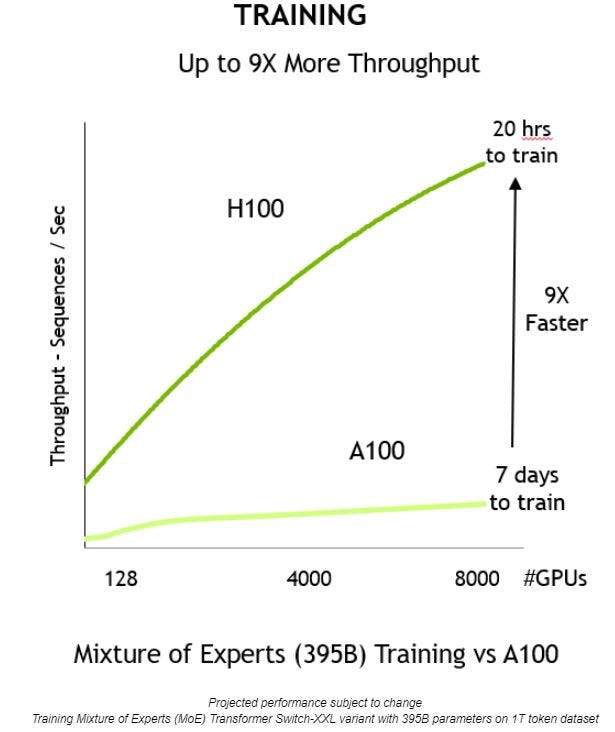

What's powering the revolution? Nvidia's GPUs. Whilst other companies, such as AMD, design GPUs and chips aimed at AI workloads, Nvidia has little competition in the market for high-performance GPUs for training machine learning models and neural networks. Its latest and greatest GPU is the H100, which currently sells for c. £20-25k each (and often significantly more on secondary markets). Generally speaking, companies buy them in boxes of 8 (8-GPU HGX H100 systems with SXM cards), which cost approximately £200-250k. Each such system weighs 70 pounds, comprises 35,000 parts, and requires AI to design and robots to assemble. Nvidia claims H100s are up to 9x faster for AI training than their closest rival, its own previous-generation A100s. It is hard to overstate how much more advanced they are than any other chip on the market for LLM training and inference.

So we've landed in an exciting but precarious position, in which tech giants and start-ups alike are scrambling for a very limited supply of cutting-edge GPUs. These are designed solely by Nvidia (along with their associated CUDA software) and manufactured solely by its partner, Taiwan Semiconductor Manufacturing Co (TSMC). TSMC's fabrication facilities (fabs) are currently the only ones in the world capable of manufacturing these chips, and most fab capacity is reserved more than 12 months in advance.

Chips are designed and developed with a particular production process in mind, and it takes time to optimise that process to maximise yield. A chip designer must therefore contract with a fab for production while the chip is still being designed, and, for now, most of those contracts are with TSMC. This forms a natural bottleneck, because scaling supply to meet booming demand requires constructing additional fabs. That is no small feat. TSMC's planned fab in Arizona, for example, covers a 1,000-acre site, requires 21,000 construction workers and carries a price tag of over £30bn.

Marketplaces such as Lambda, Vast.ai, Gensyn and Bittensor therefore see this supply bottleneck as a multi-billion-dollar opportunity. They aim to address it by sourcing and coordinating idle GPU compute capacity from crypto mining facilities, independent data centres and consumer GPUs. Some of these players use blockchains to coordinate the distributed supply and/or tokens to price the marketplace.

Next week, in Part 2, I will turn to the original central question:

Can the challenges of securing, coordinating, and verifying disparate compute supply make this a winner-takes-all market, or is this just one where many start-ups will emerge to form a superfluous middle layer, competing to secure a small piece of the fundamentally commoditised and highly divisible GPU pie?

🔦 White Star & Portfolio Spotlight

Echelon chosen as the inaugural project to be supported out of the Aptos and Thala DeFi fund

Echelon will launch through Thala’s launchpad.

Project teams can now launch a pre-sale for their token, NFT, or product in minutes with BoomFi

Teams can secure early commitments to boost their chances of success. Trustless escrow smart contracts hold the funds, releasing them upon asset delivery or minting.

CEO of Safello Emelie Moritz spoke on Avanzapodden – one of Sweden's largest financial podcasts

Tune in for insights on crypto investing, bitcoin ETFs and Safello's position as the leading cryptocurrency exchange in the Nordics.

🏦 Enterprises & Institutions

Abra will open customer withdrawals following Texas settlement

Abra held around $13.6 million worth of cryptocurrencies for 12,000 customers, according to the settlement.

HashKey, FTSE Russell team up to offer ‘more nuanced’ crypto exposure

HashKey Capital has linked up with index management giant FTSE Russell to give investors crypto exposure beyond the industry’s major assets.

Kraken hires former N26 and Coinbase execs to help bolster compliance and global expansion

Crypto exchange Kraken announced two strategic hires on Thursday it said would help position the company for "continued growth amid evolving global regulatory environments."

Fidelity becomes second spot bitcoin ETF issuer to hit $1 billion of inflows

Fidelity's FBTC spot bitcoin ETF followed BlackRock's IBIT to become the second fund to exceed $1 billion in inflows, after five days of trading.

⚖️ Government & Regulation

SEC delays decision on Grayscale's proposal for spot Ethereum ETF again, with order to institute proceedings and seek public comment

The agency asked for public comments on Thursday on how to move forward with the proposed Grayscale Ethereum Trust.

Bank of England and UK Treasury remain undecided on a 'digital pound'

In a response published on Thursday, the BoE and the UK Treasury concluded that it is still too early to decide conclusively whether a digital pound is necessary. However, they stated that they intend to continue their research and design work on a central bank digital currency (CBDC).

Alan Howard's Elwood gets FCA nod to offer trading of security tokens and derivatives

Elwood said that the authorization related to its execution management system with respect to security tokens and derivatives. This platform lets its clients connect to crypto exchanges and over-the-counter trading venues.

Swiss fintech firm Taurus granted approval to offer tokenized securities to retail clients

The Swiss fintech firm Taurus has opened its TDX trading marketplace for tokenized assets to retail clients after receiving regulatory approval from Switzerland's Financial Market Supervisory Authority (FINMA).

China forms metaverse working group with Huawei, Tencent, Ant Group and others

The creation of the task force comes as the country hopes to set up industrial standards for its metaverse sector. The MIIT said in a document released in September that the formulation of basic standards such as metaverse terminology and reference architecture could be beneficial for unifying consensus among stakeholders.

💰 Funding & Exits

Meson Network Completes Strategic Financing Round Led By Presto Labs And Announces Token Sale On CoinList

DePIN project Meson Network has completed a strategic financing round led by Presto Labs, with the specific amount of funding not disclosed.

Digital Asset Platform Web3Intelligence Raises $4.5M Ahead of New Token Rollout

The private funding round included participation from DAO MAKER, Shima Capital and Gate.io, among other investors.

Sygnum raises more than USD 40 million in interim close of oversubscribed financing round

As of the completion of this interim close the company’s post-money valuation stands at USD 900 million.

Axiom, Protocol for Historical Ethereum Data, Raises $20M, Led by Paradigm, Standard Crypto

The funding will go towards further developing the protocol and adding new hires. Axiom allows smart contract developers to access historical data from Ethereum and then perform intensive computations off-chain.

Crypto Custodian BitGo Receives Investment From Iconic Cash Handling Firm Brink’s

Brink’s says it is continuing its growth in the evolving digital asset industry with the BitGo investment.

Masa raises $5.4 million in seed funding to build a personal data network on Avalanche

Masa aims to create a data platform, allowing users to contribute personal data and receive compensation in the form of Masa's native token. Developers will have access to this data for training AI models and creating applications in a manner that preserves user privacy.

Bagel Network Raises $3.1 Million in Pre-Seed Funding Round Led by CoinFund

Bagel Network aims to address data monopolies by creating a marketplace that allows data scientists and AI engineers to exchange and authorize verifiable datasets in a cost-effective and privacy-preserving manner. The project seeks to develop a decentralized data platform to support machine learning (ML) models.

Ethereum Layer 2 developer Polymer Labs raises $23 million in Series A funding

Polymer is building an Ethereum Layer 2 network to provide interoperability-as-a-service.

Ingonyama Raises $21 Million for Zero-Knowledge Proof Acceleration and Semiconductor Development

The company aims to create open-source libraries for zk proof acceleration and, in the long term, to develop zk-focused semiconductors. The funding round was led by Geometry, Walden Catalyst Ventures and IOSG Ventures, with participation from Samsung Next and the companies behind Zcash, Arbitrum and Filecoin, among others.

EDX Plans Asia Crypto Exchange With Funding From Sequoia and Pantera Capital

To appeal to institutional investors, EDX built its own clearinghouse and outsourced the role of the custodian to avoid the potential for co-mingling of funds. It’s working with Anchorage Digital as its custodial partner.

Dinari Secures $10M Seed Funding, Expands Crypto Asset Offerings

The company has been making strides in expanding its offerings, particularly through the introduction of Real World Assets (RWAs) of US stocks, ETFs, and other assets, backed on a 1:1 basis on the Arbitrum One network.

Scene Infrastructure Company Raises $3 Million in Seed Funding Led by A16z Crypto

SIC will be led by Jose Mejia and Ethan Daya, who are long-term DAO contributors and co-founders of Zora. Scene Infrastructure primarily focuses on accelerating FWB software development and expanding FWB token utility.

Arcade2Earn raises $4.8 million in private token round ahead of public ARC sale

Arcade2Earn, a play-to-earn gaming platform that recently moved away from Solana to Ethereum and Avalanche, has raised $4.8 million in a new funding round led by Crypto.com Capital.

Mining rig maker Canaan raises $50 million in preferred shares financing

Crypto mining rig maker Canaan has raised over $50 million through preferred shares financing to enhance its research and development capabilities and expand production scale.

🚀 Project Launches & Updates

Aleo mainnet set to come within weeks with lofty goal of bringing privacy to crypto

The Aleo mainnet is set to launch in the next few weeks, once some final bugs have been squashed, in a bid to bring privacy to crypto transactions.

Synthetix deploys first perpetuals protocol on Base blockchain

Synthetix, a decentralized crypto derivatives marketplace, has deployed V3 of its perpetuals contracts protocol on Base, an Ethereum Layer-2 blockchain developed by Coinbase.

Rari Foundation launches Rari Chain mainnet on Arbitrum to help protect NFT royalties

Rari Chain is an Arbitrum-based EVM equivalent chain that preserves royalties by embedding them on the node level. It's a Layer 3, which means it boosts scaling and other features of a Layer 2, in this case Arbitrum, to augment the NFT ecosystem.

🔥 Other Bits We're Excited About

OSL executive says Hong Kong could debut spot crypto ETF by mid-year

Gary Tiu, executive director and head of regulatory affairs of OSL, a Hong Kong-licensed crypto exchange, said that the special administrative region could potentially see the issuance of its first spot crypto exchange-traded funds by the middle of this year, according to local media.

Bitcoin surpasses silver to become the second-largest commodity ETF in the US

Silver was previously the second-largest single-commodity ETF by AUM in the U.S. However, spot bitcoin ETFs, including Grayscale's converted GBTC trust, now hold approximately 647,651 bitcoin, amounting to $27.5 billion in AUM.